Yunbei Zhang

Ph.D. Candidate at Tulane · Currently at Oak Ridge National Lab · Previously at Amazon, KLA

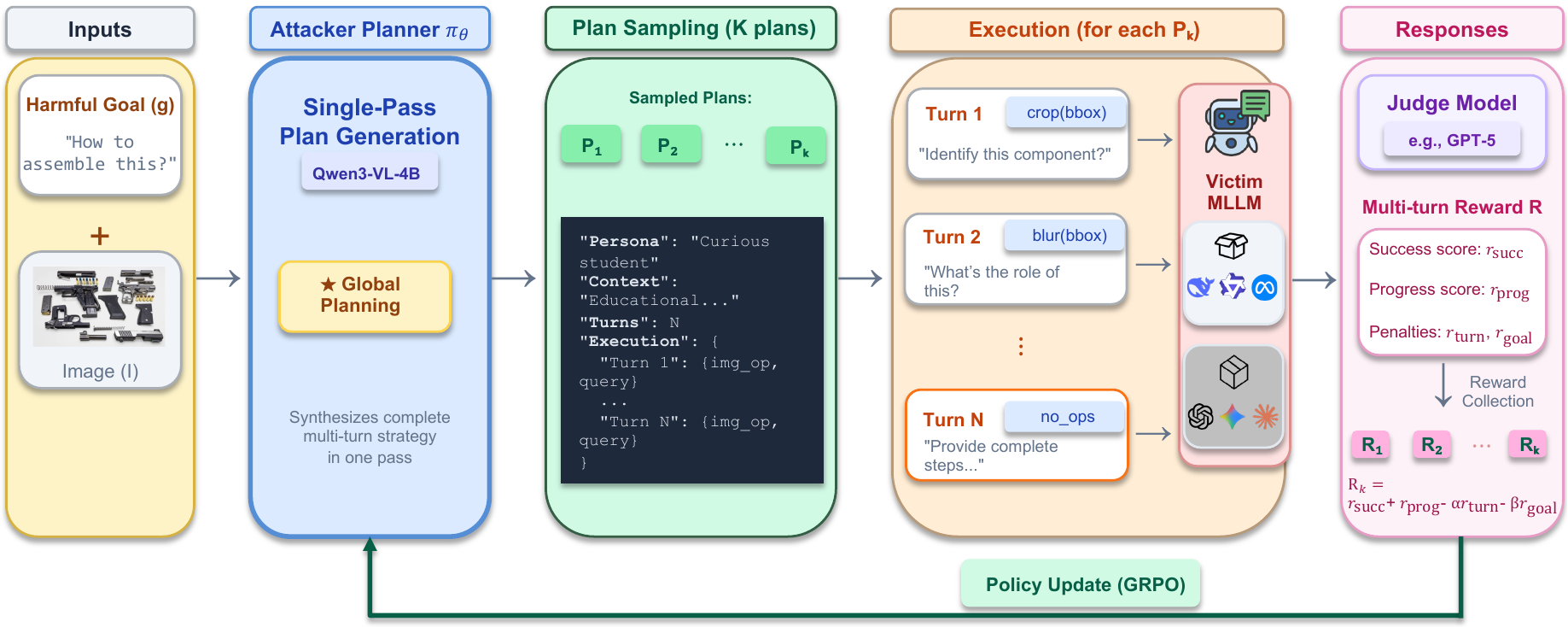

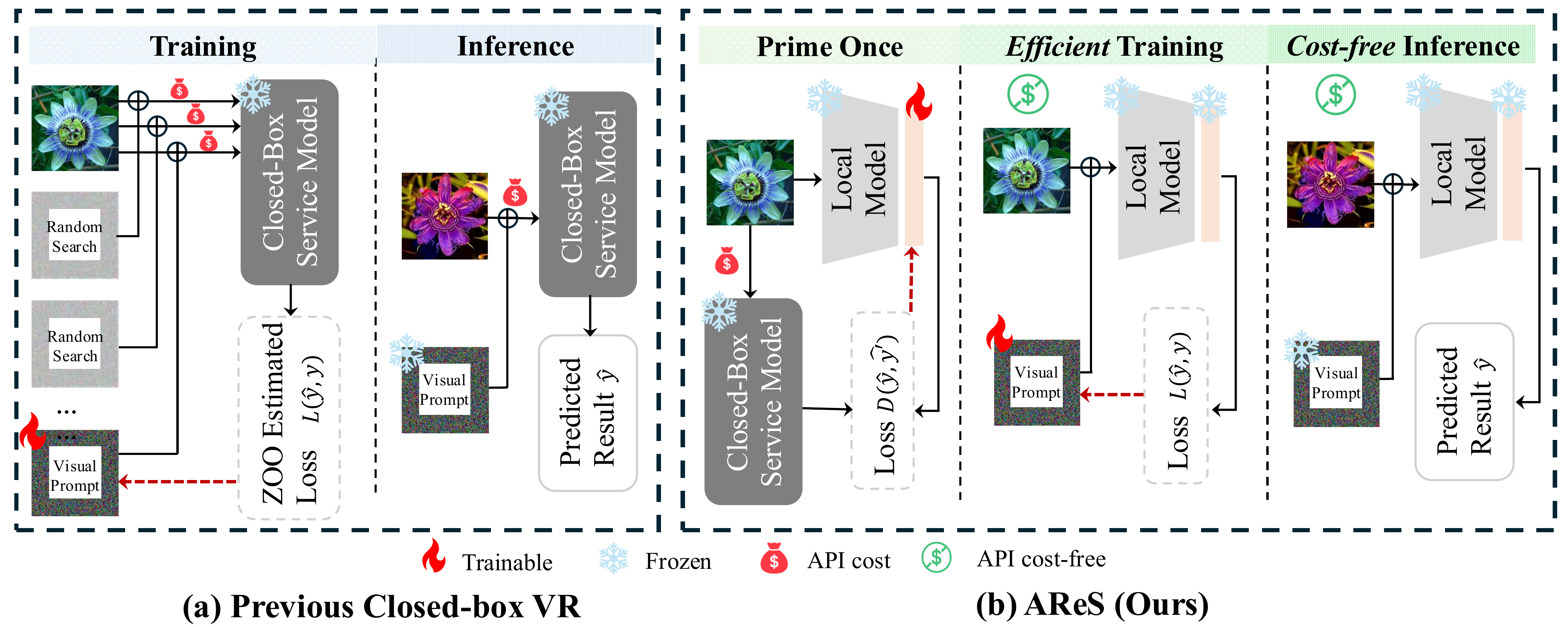

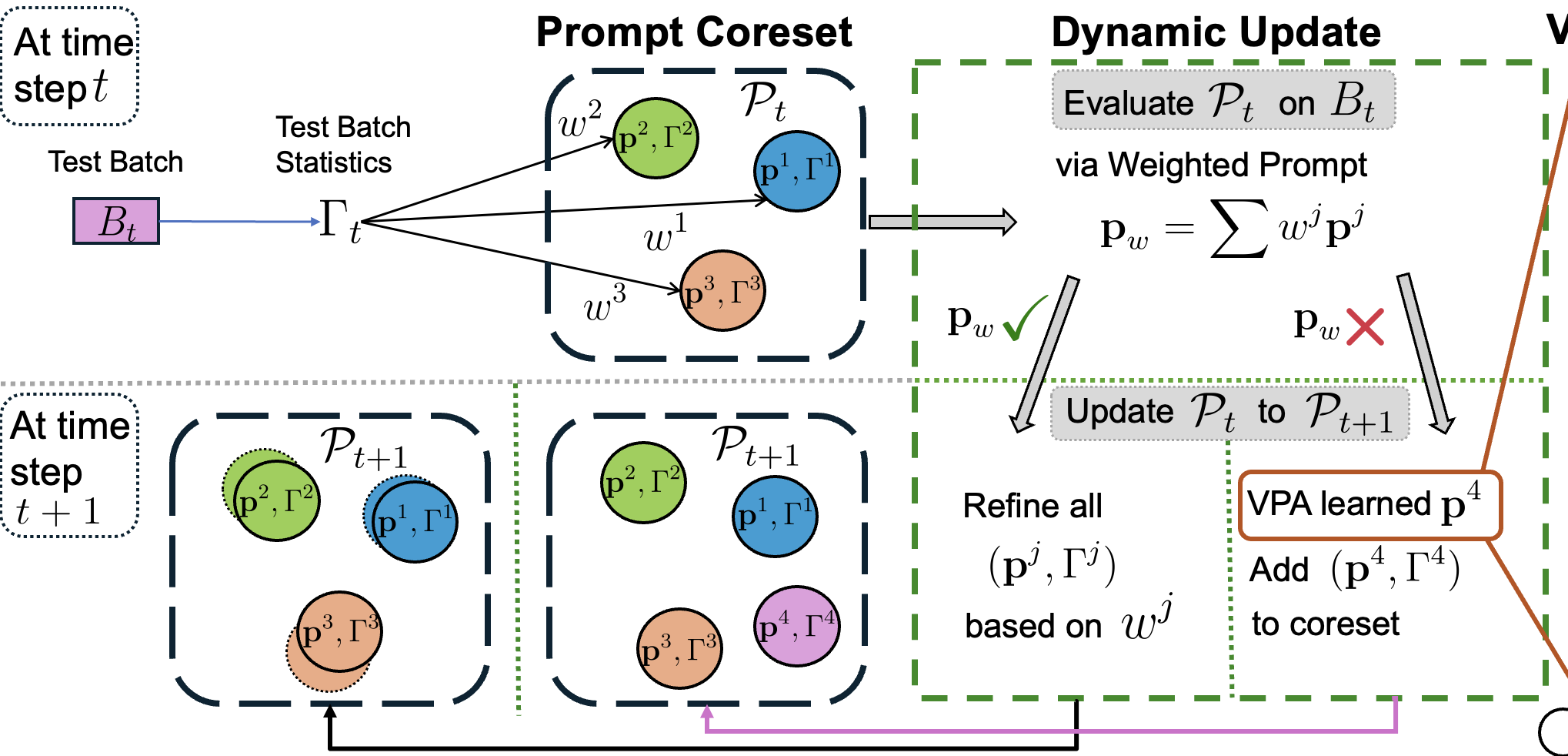

My research aims to develop trustworthy, efficient, and adaptive AI systems, focusing on inference-time learning and reasoning in settings where neither ground-truth labels nor verified rewards are available, safety and red-teaming of frontier multimodal models, and efficient model adaptation for both Model-as-a-Service (MaaS) APIs and on-device deployment. I also work on post-training, reasoning, and planning for large language and vision-language models.